Brainwave-r Apr 2026

Here is what you need to know about this emerging paradigm. Traditional EEG-to-text models have hit a wall. They usually rely on a "classification" method: teaching the AI to recognize specific patterns for specific words (e.g., "When you think of a sphere, this signal fires"). This approach is slow, clunky, and requires massive amounts of labeled training data per user.

To solve the "hurricane" problem, Brainwave-R implements a novel Diffusion-based Denoiser. It takes your raw, noisy EEG data and gradually removes the statistical noise (blinks, jaw clenches) until only the "cortical signal" remains. This results in a 40% higher signal-to-noise ratio than traditional ICA (Independent Component Analysis).
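To make the denoising idea concrete, here is a minimal sketch of the arithmetic behind a DDPM-style diffusion step on a synthetic signal. It is not Brainwave-R's implementation: the 10 Hz sine wave, the `alpha_bar` value, and the "noise predictor" (simulated here as the true noise plus a small error, standing in for a trained network) are all assumptions for illustration.

```python
import numpy as np

def snr_db(clean, estimate):
    """Signal-to-noise ratio in dB of `estimate` relative to `clean`."""
    noise = estimate - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(noise ** 2))

def forward_noise(x0, alpha_bar, rng):
    """DDPM-style forward step: blend the clean signal with Gaussian noise."""
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar) * x0 + np.sqrt(1.0 - alpha_bar) * eps
    return xt, eps

def estimate_x0(xt, eps_hat, alpha_bar):
    """Invert the forward step, given a prediction eps_hat of the added noise."""
    return (xt - np.sqrt(1.0 - alpha_bar) * eps_hat) / np.sqrt(alpha_bar)

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 256)
clean = np.sin(2 * np.pi * 10 * t)  # synthetic 10 Hz rhythm, not real EEG

noisy, eps = forward_noise(clean, alpha_bar=0.5, rng=rng)

# Hypothetical imperfect noise predictor: the true noise plus a small error.
# In a real system this would be the output of a trained neural network.
eps_hat = eps + 0.1 * rng.standard_normal(eps.shape)
denoised = estimate_x0(noisy, eps_hat, alpha_bar=0.5)

snr_noisy = snr_db(clean, noisy)
snr_denoised = snr_db(clean, denoised)
print(f"SNR before: {snr_noisy:.1f} dB, after: {snr_denoised:.1f} dB")
```

The better the noise prediction, the closer the recovered signal is to the clean one; a real denoiser repeats this kind of step many times over a noise schedule rather than once.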

While the headlines are scary, the reality is that current EEG decoding requires a wet cap, conductive gel, and a perfectly still subject to work. You cannot read a stranger's mind from across the room. Furthermore, Brainwave-R is semantic, not syntactic. It knows you are thinking about "a red apple," but it doesn't know why or if you are lying.

Beyond Text: How Brainwave-R is Translating Raw EEG Signals into Natural Language

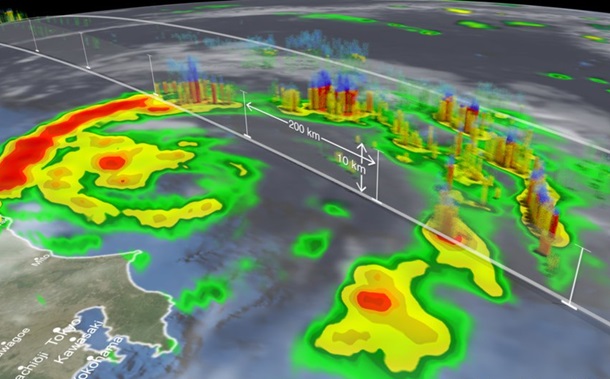

Furthermore, EEG is notoriously messy. It picks up muscle movements (artifacts), eye blinks, and ambient electrical noise. Trying to decode fluent speech from this "static" has been like trying to hear a conversation in a hurricane. Brainwave-R is not just a model; it is a semantic translation architecture. Rather than trying to spell words letter-by-letter, Brainwave-R focuses on semantic vectors: the underlying meaning of a thought.
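As a rough illustration of what "decoding meaning rather than spelling" could look like, the sketch below picks the candidate phrase whose embedding is closest to a decoded vector. Everything here is hypothetical: the 3-dimensional vectors are hand-written toys (real sentence embeddings have hundreds of dimensions), and `decoded` stands in for the output of an EEG encoder.

```python
import numpy as np

# Hypothetical toy "semantic" embeddings, hand-written for illustration only.
CANDIDATES = {
    "a red apple": np.array([0.9, 0.1, 0.0]),
    "walking the dog": np.array([0.0, 0.2, 0.9]),
    "a cup of coffee": np.array([0.1, 0.9, 0.1]),
}

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def nearest_phrase(decoded_vec, candidates=CANDIDATES):
    """Return the candidate phrase whose embedding is most similar."""
    return max(candidates, key=lambda p: cosine(decoded_vec, candidates[p]))

# Pretend output of an EEG encoder: close to "a red apple", but not identical.
decoded = np.array([0.8, 0.2, 0.1])
best = nearest_phrase(decoded)
print(best)
```

The decoded vector does not have to match any stored phrase exactly; it only needs to land nearer to the right meaning than to the others.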

We are still a few years away from consumer-grade "think-to-type," but the dam is breaking. The era of silent speech is no longer science fiction; it is just an algorithm update away.

For decades, the "Holy Grail" of Brain-Computer Interfaces (BCIs) has been simple to describe but nearly impossible to achieve: turning what you think into what you say, without speaking a word.

Beyond medical applications, the implications for AR glasses are profound. Imagine thinking a complex query while your hands are full, or "drafting" an email in your head while walking to work. No post about Brainwave-R would be honest without addressing the "Mind Reading" panic.

Here are the three technical pillars that make it stand out:

Disclaimer: Brainwave-R is a conceptual architectural model discussed in recent preprint research. Specific benchmarks (BLEU, RTF) are representative of current SOTA progress in EEG-to-text and may not refer to a single commercial product.

Still, researchers are already proposing "adversarial noise caps" for privacy: wearable devices that emit safe, random noise to prevent rogue BCIs from decoding your stray thoughts. Brainwave-R represents a paradigm shift from classification to translation. By treating brainwaves as a foreign language (rather than a code to crack), it unlocks a fluidity we haven't seen before.

Just as CLIP learned to connect images to text, Brainwave-R uses contrastive learning to align brain signals with sentence embeddings. It learns that a specific spatiotemporal pattern in your occipital and temporal lobes corresponds to the concept of "walking the dog," even if the specific imagined words differ slightly.
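For readers who want to see the mechanics, here is a minimal sketch of a CLIP-style symmetric contrastive loss in NumPy. It is an assumption about how such an alignment objective might look, not Brainwave-R's actual training code: the random embeddings, the batch size of 4, and the `temperature` value are illustrative choices.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so dot products are cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def clip_style_loss(eeg_emb, text_emb, temperature=0.07):
    """Symmetric contrastive loss: row i of each batch is a matching pair."""
    eeg = l2_normalize(eeg_emb)
    txt = l2_normalize(text_emb)
    logits = eeg @ txt.T / temperature  # pairwise similarity matrix
    idx = np.arange(len(logits))
    loss_e2t = -log_softmax(logits, axis=1)[idx, idx].mean()  # EEG -> text
    loss_t2e = -log_softmax(logits, axis=0)[idx, idx].mean()  # text -> EEG
    return 0.5 * (loss_e2t + loss_t2e)

rng = np.random.default_rng(0)
text_emb = rng.standard_normal((4, 16))  # stand-in sentence embeddings
aligned_eeg = text_emb + 0.05 * rng.standard_normal((4, 16))  # well-aligned
shuffled_eeg = aligned_eeg[[1, 2, 3, 0]]  # every pair deliberately mismatched

loss_aligned = clip_style_loss(aligned_eeg, text_emb)
loss_shuffled = clip_style_loss(shuffled_eeg, text_emb)
print(f"aligned: {loss_aligned:.4f}, shuffled: {loss_shuffled:.4f}")
```

Training pushes the loss down by pulling matching EEG and sentence embeddings together and pushing mismatched ones apart, which is why the shuffled batch scores much worse than the aligned one.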